A digital nomad with a flair for aesthetics. Leveraging a media and communication background to craft engaging and socially responsible human experiences along with visual narratives.

Credibility Review Board (CRB)

A service designed to combat misinformation and empower you to make informed decisions

The overwhelming amount of content we encounter daily and our limited attention spans make it challenging to fact-check every piece of information we come across online. Research suggests that the primary driver of whether someone shares a piece of information is not its accuracy or content but whether it comes from a friend or a celebrity with whom they want to be associated. The Credibility Review Board (CRB) is a decentralized community of volunteers who review flagged posts and provide diverse perspectives. It taps into collective intelligence to assess the accuracy of suspicious content.

Roles

I assumed the following roles in designing this service:

- Service Designer

- User Experience Designer

- Interaction Designer

- User Interface Designer

- Visual Designer

Deliverables

I produced the following deliverables for this service:

Interaction Design

- High-fidelity interactive prototypes on the web and iOS.

UX/UI + Service Design

- Secondary Research

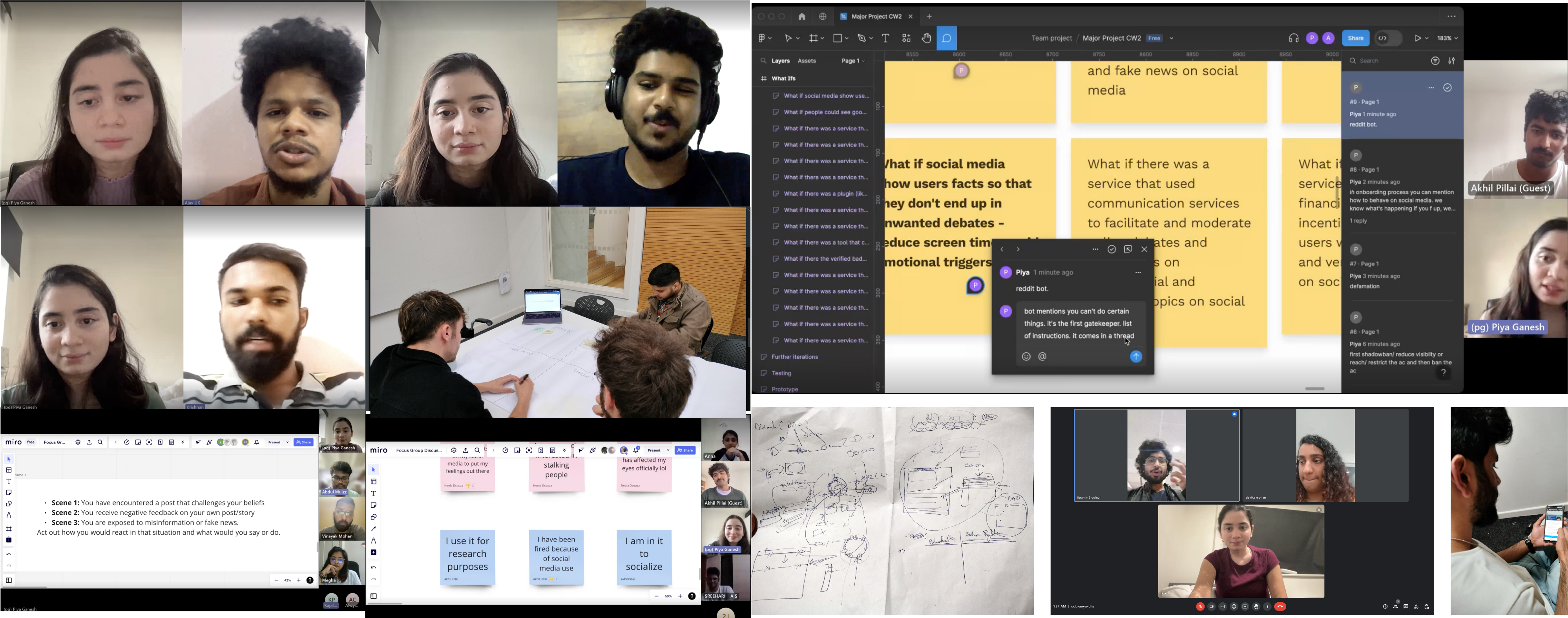

- One-on-one interviews and Focus group discussions

- Data Distillation

- Personas

- Storyboard

- Concept Generation

- Designing a service/solution for the problem

- User Testing Video

Project Specifications

Duration: 12 weeks

Tools

- Figma

- Miro

- Illustrator

- Premiere Pro

Overview

The design features of social media platforms, such as notifications, personalized recommendations, infinite scrolling, and pull-to-refresh, are intentionally designed to manipulate human behaviour and keep users engaged with the platform.

Facebook discovered that they could affect real-world behaviour and emotions without triggering users’ awareness.

Algorithms promote content that sparks outrage and hate, and they amplify biases within the data we feed them. 64% of people who joined extremist groups on Facebook did so because of algorithms.

Surveillance-based advertising amplifies unrest and fuels political divisions through misinformation and hate speech. Each additional word of moral outrage in a tweet increases its retweet rate by 17%, further fuelling polarisation.

Problems

- Information Overload: Users often encounter an overwhelming amount of information on social media, making it difficult to discern what’s true and what’s not.

- Sharing Misinformation: Studies have found that users’ social media habits can lead to the sharing of fake news, sometimes even more than political beliefs or lack of critical reasoning.

- Trust Issues: The prevalence of fake news can undermine the legitimacy of established organizations and become a barrier to communicating essential information during times of crisis.

- Social Media Fatigue: Increased use of social media platforms for news consumption can lead to social media fatigue, which in turn may make users more likely to share fake news.

Research

Findings

My research first centred on how technology alters user behaviour on social media, and on understanding the problems and the platforms' efforts to address them. To gain first-hand insight into the issue, I conducted one-on-one interviews and focus group discussions to understand how users feel about algorithms, filter bubbles, political polarisation, moral outrage, misinformation and fake news, and what they're hoping to resolve. This also helped me understand users' journeys and the specific issues that needed to be addressed.

- Participants mentioned that when they started using social media, they lacked the knowledge of how to use and control it. They no longer care about social validation: followers, likes, and shares don't matter anymore, although some mentioned that seeing people with similar opinions reaffirms and validates their beliefs.

- Cancel culture works both ways. It is temporary and doesn’t solve problems but reduces the impact. Users feel calling it out is a fight against misinformation. Some users feel social media creates an illusion of having a voice, but popular opinions matter more. Education and exposure are key to forming rational opinions.

- Criticism of sensitive topics can lead to emotional detachment; some groups use it unfairly, and Facebook normalises dissecting beliefs. Those who took a break from social media were emotionally down due to the toxicity they experienced on social media or wanted to focus on other tasks.

- Users feel misinformation and fake news are an unending menace. They want less exposure to hate speech and distortion of facts, and they want platforms to invest in content moderation and help users seek perspectives to make informed decisions.

UX Vision Statement

I believe there is an opportunity to design a service for social media users who want to make informed decisions and feel confident, empowered, and responsible BUT are frustrated and confused by the fake news and misinformation and the time-consuming and overwhelming nature of fact-checking.

Design Principles

- Empowerment: Enable users to make informed decisions about content by equipping them with knowledge and resources to assess credibility and combat misinformation.

- Responsibility: Promote mindful digital citizenship by incentivising civil discourse, implementing friction for harmful speech, and fostering communities of trust while differentiating between satire or jokes and actual fake news.

- Seamlessness: Provide users with real-time assistance within their existing social media flows to minimise disruptions and reduce time spent fact-checking.

Defining key differences in motivations through personas

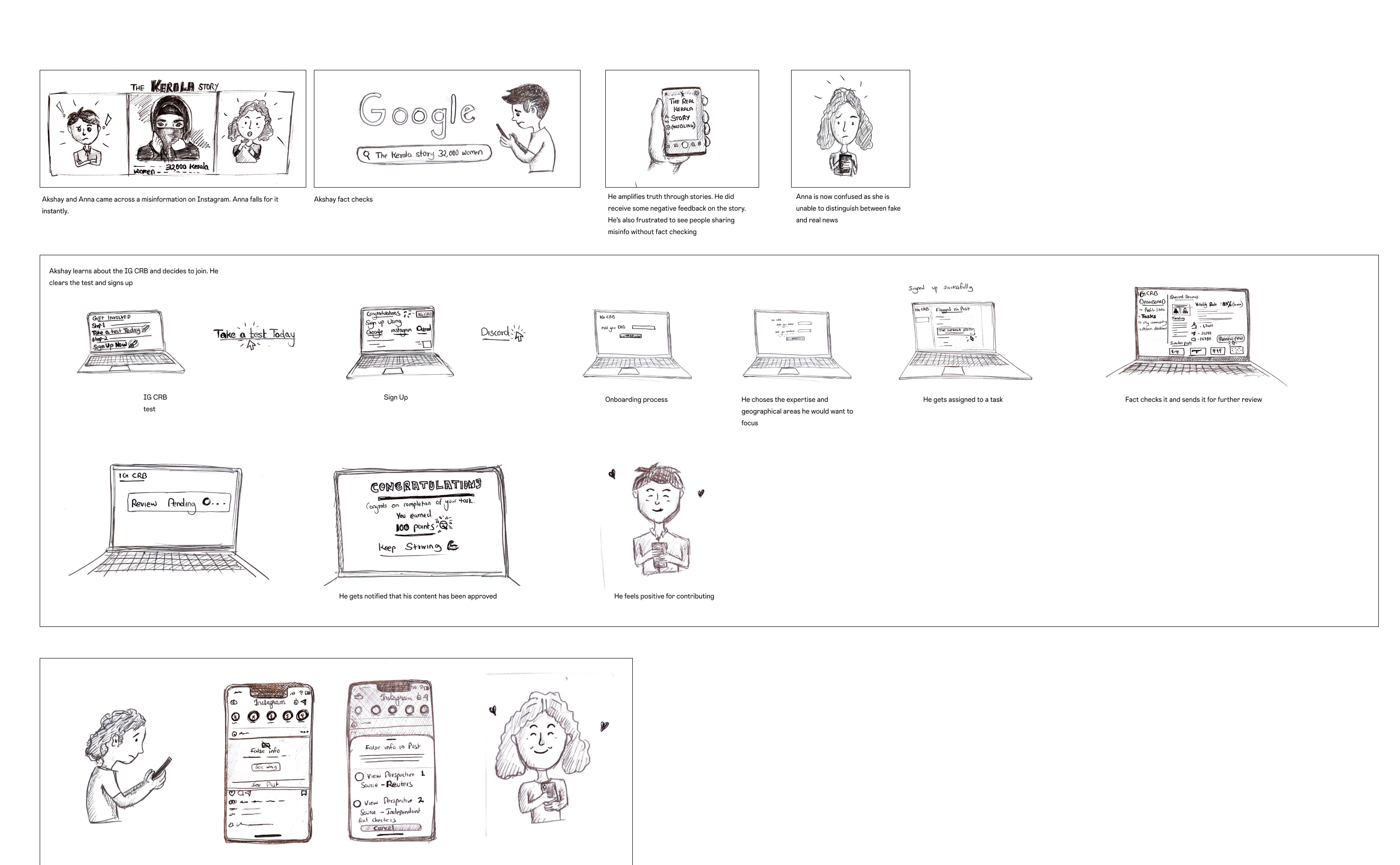

Initially, it seemed that my target users were the same, but upon closer inspection, the user research and empathy map made clear that there were divergent motivations and behaviours. Creating personas helped bring some clarity to those divergences, which would become important reference points in the design stage. As research and design proceeded, I focused primarily on two personas because they represented heavy emphasis on two key behaviours: the one who fact-checks before sharing and makes informed decisions and the one who can easily be misled and finds it difficult to differentiate between fake and real news.

Exploring common tasks in order to strengthen user empathy

By creating empathy maps and storyboarding a user journey for two personas and their typical tasks, I uncovered key emotional moments that the IG CRB and its extended features needed to address.

The empathy map outlined emotional pains and gains around misinformation on social media to guide solutions. For example, the frustration of misinformation and the desire to make informed decisions.

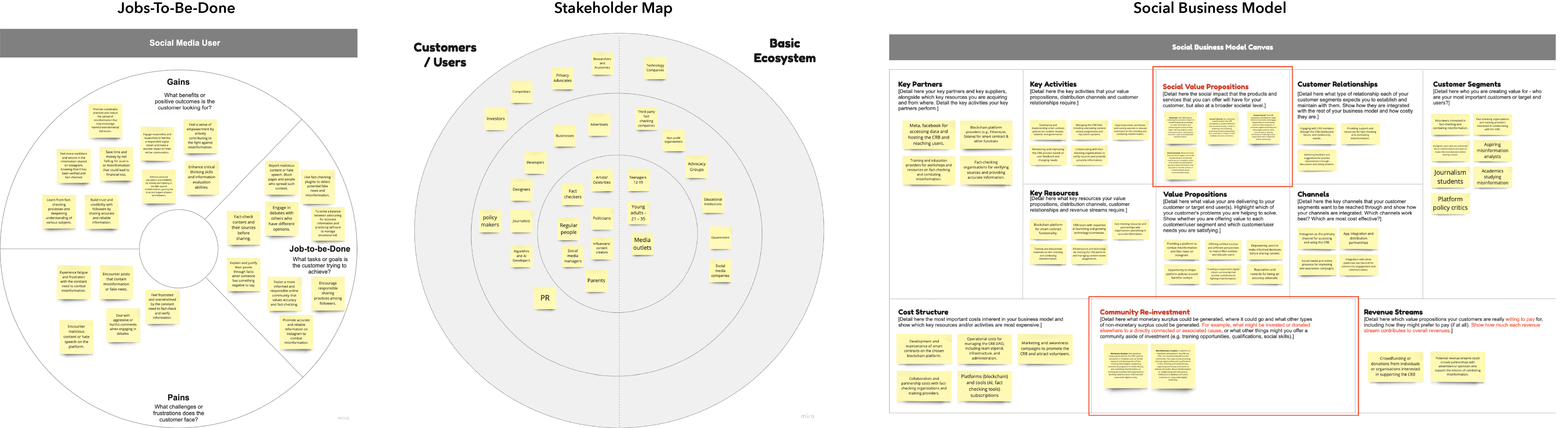

The storyboard visualised user flows early in the design process and was tested with users. In addition, the stakeholder map identified the key stakeholders involved in implementing and participating in the CRB. Jobs-to-be-done uncovered user goals and motivations around combating misinformation.

Creating structure

It's a B2C-B2B model: it directly targets Instagram users who are concerned about misinformation and want to make informed decisions before sharing content, and it also involves partnerships and collaborations with other organisations.

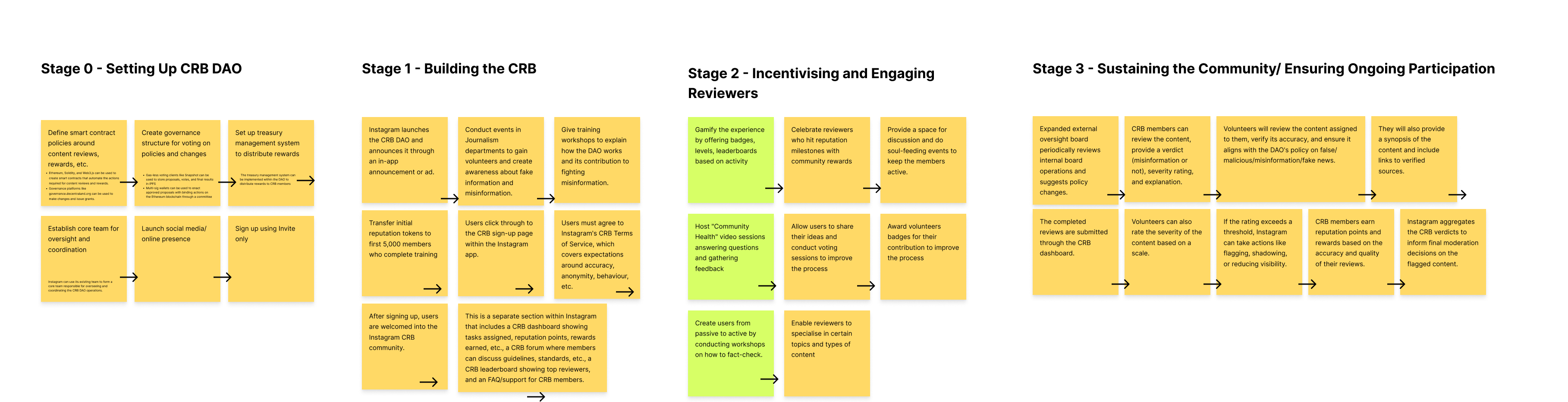

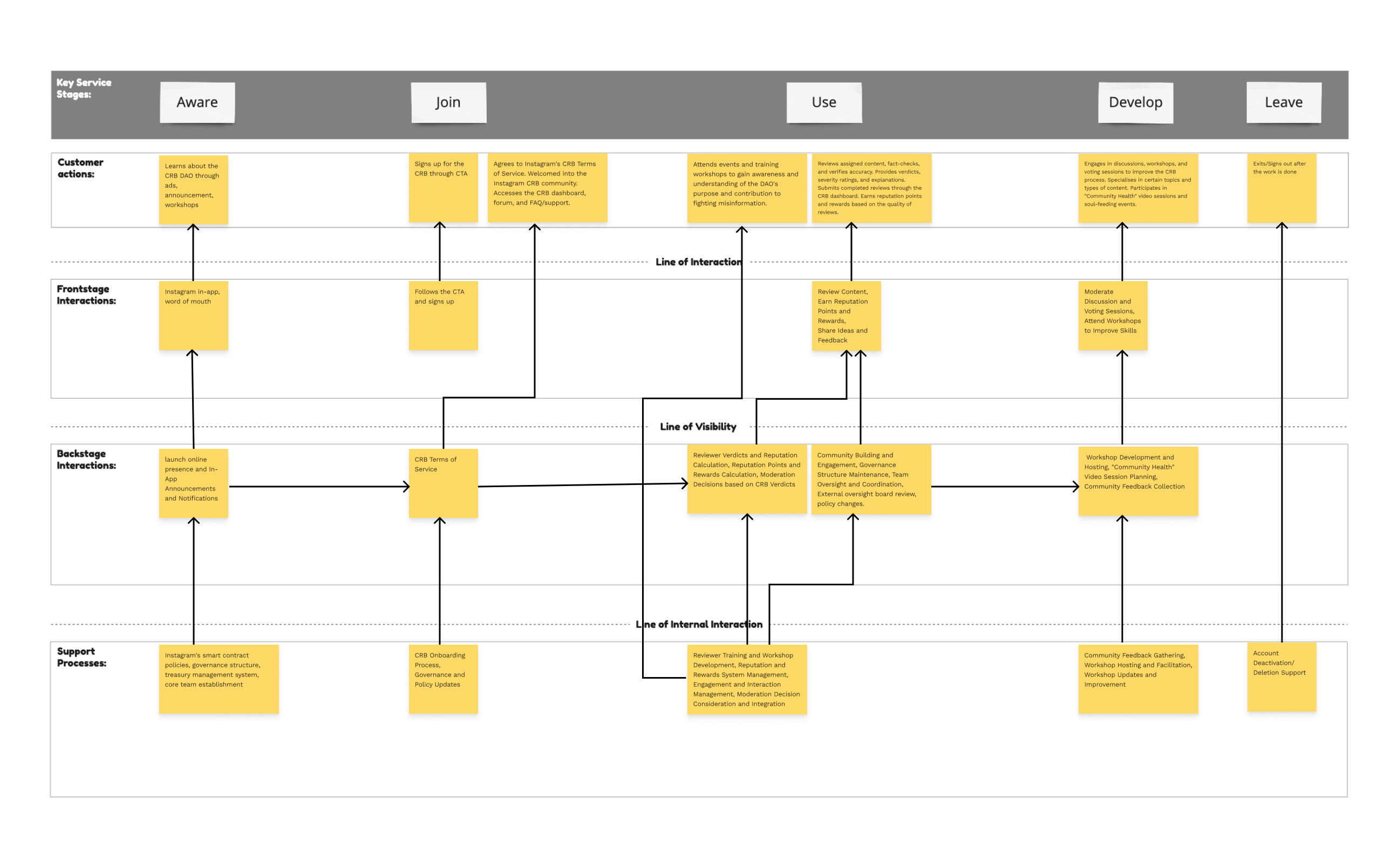

The Phase Gate process, guided by co-design/co-creation sessions, depicts the key tasks that the high-fidelity prototype would focus on:

- Setting up the CRB (smart contracts, governance structure, core team)

- Onboarding and registration (screen test, transparency with the T&C)

- Incentivising and Engaging Reviewers (Gamify – badges, levels, leaderboards; workshops)

- Sustaining the community (Policy changes, involve participation)

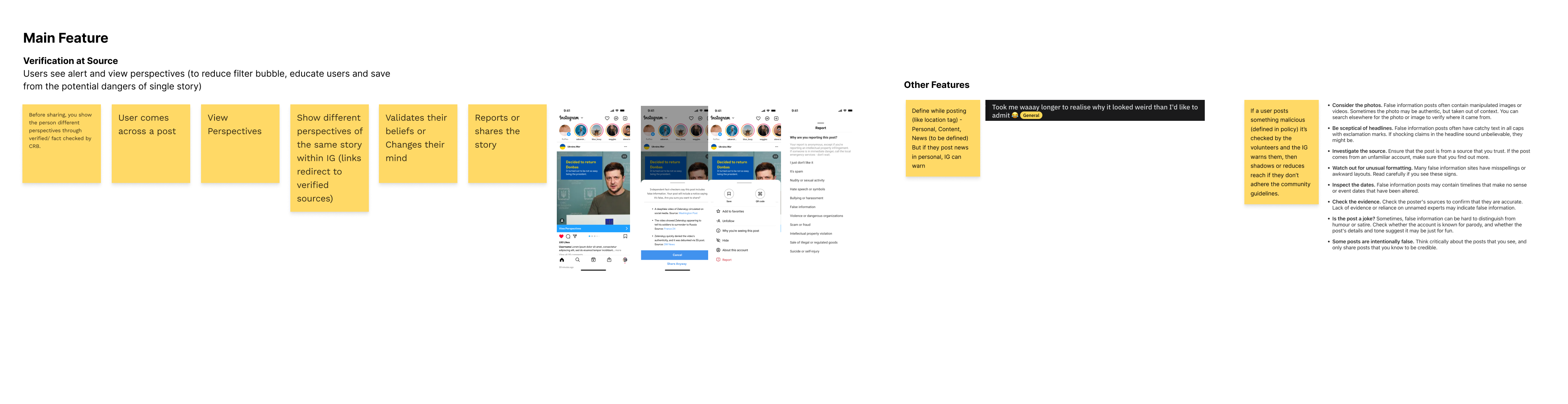

- IG embeds the reviewed content in “various perspectives” within the posts.

- IG users view multiple perspectives to make informed decisions. This may help protect users from potential fake news and misinformation and keep them from falling into an echo chamber.

Creating What Ifs and testing them with users through co-design/co-creation sessions helped me iterate on and finesse the concept. The phase gate process, along with the user insights collected, guided design decisions moving forward. Secondary research provided knowledge of existing industry approaches to build on.

Visualising a user-centric experience

Since the service is for Instagram, the UI follows the platform's brand guidelines.

- The CRB seamlessly integrates into the Instagram experience through a dedicated website portal. This portal allows users to sign up, view policies, access their review dashboard, and join the CRB Discord community.

- Displaying transparent CRB policies during onboarding establishes trust and expectations around participation. Users understand the rules of the road up front.

- Once onboarded, the streamlined dashboard enables reviewers to find and claim content to analyse quickly. Review status and reputation metrics provide ongoing performance insights.

- For community building and discussions, the CRB Discord creates a space for members to workshop guidelines, swap fact-checking tips and foster connections.

- The service blends seamlessly into users' existing flows by linking the external CRB website directly to Instagram. Core components like policies, reviews, and community equip members to scrutinise suspected posts effectively.

Concerns

Reviews could be manipulated by bad actors misrating content, undermining credibility.

How to Tackle?

- The training program includes modules on identifying and mitigating biases.

- Reviewers are taught to recognize common cognitive biases. Reviews are randomly assigned to reviewers with different backgrounds to maximize diversity of perspectives.

- Reviewers must provide reasoning and cite credible sources backing their verdicts. This creates accountability.

- An AI assistant provides warnings if review language exhibits possible bias. Reviewers can modify their reviews before submitting. Reviews with very high or very low bias ratings will be flagged for further scrutiny by moderators.

- Reviews are aggregated from multiple reviewers and moderators before finalizing rating decisions. Consensus overrides outliers.

- Moderators perform audits, warn reviewers exhibiting chronic bias, and remove them if bias persists after remediation.
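The assignment and aggregation safeguards above can be illustrated with a minimal sketch. This is not part of the prototype: the function names, the background labels, the 1 to 5 credibility scale, and the `outlier_gap` threshold are all assumptions made for illustration. It uses the median as one simple way to let consensus override outliers.

```python
import random
from statistics import median


def assign_reviewers(reviewers, k, seed=None):
    """Pick k reviewers at random, preferring distinct backgrounds.

    `reviewers` maps a reviewer name to a background label
    (a hypothetical field, not defined in the case study).
    """
    rng = random.Random(seed)
    names = list(reviewers)
    rng.shuffle(names)
    chosen, seen = [], set()
    # First pass: take at most one reviewer per background.
    for name in names:
        if len(chosen) == k:
            break
        if reviewers[name] not in seen:
            chosen.append(name)
            seen.add(reviewers[name])
    # Second pass: fill remaining slots if backgrounds ran out.
    for name in names:
        if len(chosen) == k:
            break
        if name not in chosen:
            chosen.append(name)
    return chosen


def aggregate(ratings, outlier_gap=2):
    """Return (consensus, outliers) for ratings on an assumed 1-5 scale.

    The consensus is the median, so one extreme rating cannot move it far.
    Reviewers whose rating differs from the consensus by at least
    `outlier_gap` are listed for moderator follow-up.
    """
    consensus = median(ratings.values())
    outliers = sorted(
        name for name, r in ratings.items() if abs(r - consensus) >= outlier_gap
    )
    return consensus, outliers


if __name__ == "__main__":
    pool = {"asha": "journalist", "ben": "journalist",
            "chen": "scientist", "dara": "teacher"}
    print(assign_reviewers(pool, 3, seed=1))
    print(aggregate({"asha": 4, "ben": 4, "chen": 5, "dara": 1}))
```

In this sketch a single reviewer who misrates a post is surfaced as an outlier rather than shifting the final rating, which mirrors the accountability and audit steps listed above.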

Key Takeaways

Coming from a media and communication design background, my mindset was focused on visual appeal and aesthetics. This project taught me that human-centred design requires prioritizing people’s needs and experiences first. Through the course’s participatory design tools, I learned to map complex ecosystem relationships between stakeholders like reviewers, platforms, and fact-checkers. Co-creation sessions sparked innovative ideas by incorporating diverse perspectives. A key lesson was recognising the complexity of addressing misinformation. There are no magic bullet solutions. Effective social systems require thoughtful interpretation of incentives, governance, transparency, and trust-building. My mindset shifted from delivering assets to advocating for people navigating information ecosystems. I now focus on crafting experiences that empower, not exploit. Sustainability involves considering multiple stakeholder motivations and goals. This project reinforced that solving systemic issues requires mapping interconnected dynamics between people, communities, and institutions. I aim to apply these lenses to design seamless, ethical experiences that connect on a human level. There are always opportunities to positively impact people by listening first and creating responsibly.

Awards

Ford Motor Company Fund Smart Mobility Challenge 2022 – Winner

Work Experience

Graphic Designer

F1 Studioz, Bangalore, India (Remote) – Sept 2021 – Sept 2022

Graphic Designer

Moshi Moshi Media – Bangalore, India – Mar 2021 – Sept 2021

Freelance Graphic Designer

Production House, NGO, Restaurant (Remote) – Aug 2019 – Feb 2021

Graphic Design Intern

Freshly Picked (Remote) – Aug 2020 – Nov 2020
